Google Analytics & API

Google Tag Manager

CRM integration

End-to-end analytics

Google Data Studio

Tableau

Power BI

UI/UX

A|B testing

Checkout journey optimization

Sales automation

We’ve got 7 years of web-measurement experience under our belt.

Our web-measurement story began in the trenches of a Google customer support centre, where we saw how underestimated web measurement is among SMBs.

One day we decided to leave the corporate boat and fix this.

Since then:

Tracking setups

Dashboards made

Happy clients

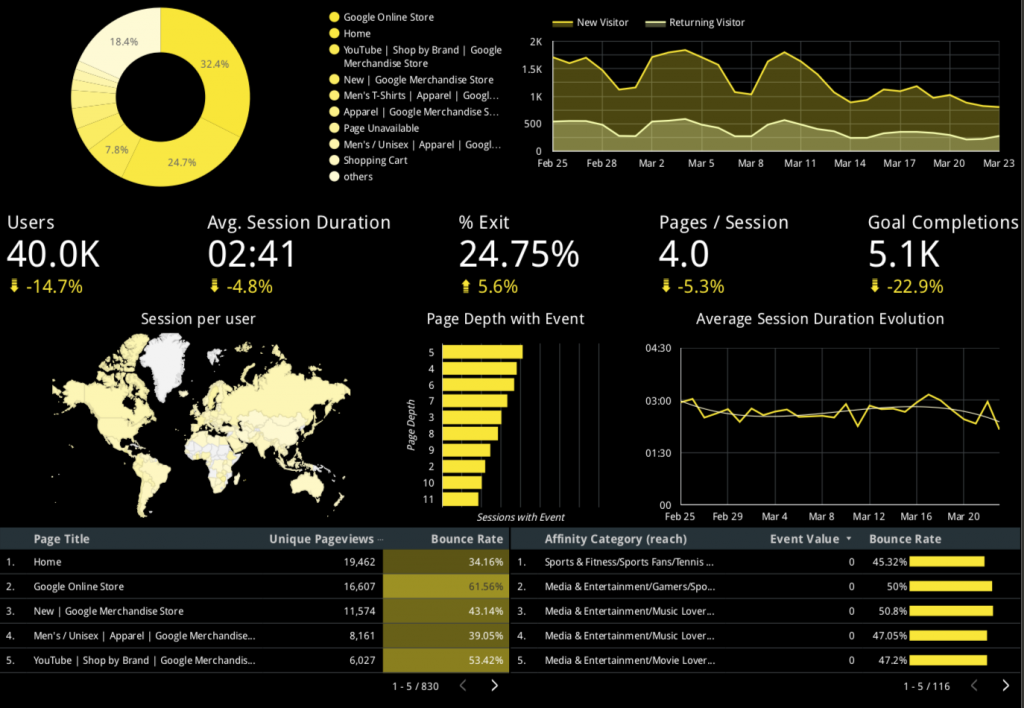

End-to-end analytics: we set up a tracking infrastructure that lets you analyze all of your customers’ touch-points on the journey to conversion. We aim to bring together data about user behavior, ad spend and completed purchases with data about offline sales and micro-conversions (e.g. phone calls and newsletter sign-ups) in a single system. This allows us to evaluate the performance of all marketing channels using attribution models and make proper decisions based on complete data.
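To give a feel for what "evaluating channels with attribution models" means, here is a minimal sketch comparing two common models, last-click and linear. The journeys, channel names and revenue figures are made up for illustration; a real setup would read them from the merged tracking data.

```python
from collections import defaultdict

def last_click(paths):
    """Give 100% of each conversion's revenue to the final touch-point."""
    credit = defaultdict(float)
    for channels, revenue in paths:
        credit[channels[-1]] += revenue
    return dict(credit)

def linear(paths):
    """Split each conversion's revenue equally across all touch-points."""
    credit = defaultdict(float)
    for channels, revenue in paths:
        share = revenue / len(channels)
        for channel in channels:
            credit[channel] += share
    return dict(credit)

# Hypothetical journeys: (ordered touch-points, revenue of the conversion)
paths = [
    (["paid_search", "email", "direct"], 120.0),
    (["paid_social", "paid_search"], 80.0),
    (["email"], 50.0),
]

print(last_click(paths))
print(linear(paths))
```

The same revenue is distributed very differently by the two models, which is why the choice of model matters when you compare channels.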

Our major customers come from:

We do due diligence on your website and marketing channels and collate it with your business objectives.

Project implementation plan broken into checklists, time estimates and task ownership. Expectations are important.

Your developers will be delighted with instructions. We also know how to properly communicate with those aliens.

From the first visit on the website to their offline behavior, color of the eyes and more. We're curious folks.

Be it a complex marketing funnel, lead scoring, ROAS report or seemingly unrelated data. We'll make sense of it.

Yes, sometimes your website sucks. We're the ones who will tell you the truth and fix things for the better.

Was happy to have a business with Denis. We were lucky to benefit from his growth hacker’s mindset and excellent google ads, google analytics, google tag manager skills. Appreciate clear communication and ability to deliver on time. Will continue cooperation after some business model reshuffling. Looking forward.

Think the above is fake?

You’re right, it’s always worth checking our Upwork profile

Senior PPC specialist. Makes your advertising campaigns’ performance a breeze. “ROAS” is his mantra.

Depends…

A basic setup ranges from $500 for a simple Shopify migration to $1,000+ for complex cases.

Advanced implementations usually cost $2,000+.

As an initial step, we scope out every project and plan every stage, with an approximate estimate and an owner for each action item.

We’d rather call ourselves a professional community. With 7 full-time rockstars on board (mainly data engineers, data scientists, digital marketers and UX/UI specialists), we also engage reliable colleagues with skills ranging from software development to video production.

Being a small team, we do our best to make sure we enjoy working with our customers. We always own what we do, think about your business strategically and get creative in all of the above. This allows us to establish relationships that some people call partnership. Our experience allows us to define the criteria that signal a potentially fruitful cooperation:

If you reckon the above describes your company, we’ll be pleased to hop on a call and begin the space journey.

Case:

EU-based ecommerce jewellery business: BigQuery integration and machine learning.

Context:

Our customer is an e-commerce business headquartered in Rotterdam (Netherlands) and a pioneer in the jewelry market. It lets customers create the jewel of their dreams in minutes thanks to a multitude of customization options and powerful 3D design techniques. There’s also an option to order a 3D model of the jewel before it goes to production.

Tools/approaches used:

Main complexities of the project:

Project requirements:

Project implementation:

Once we started implementation, it became obvious that we wouldn’t be able to receive product details (e.g. stones, metals, sizes, models) from the SaaS provider on either the frontend or the backend, which was a major blocker.

Therefore we moved forward with a workaround:

MERGING GA4 DATA WITH CRM AND OTHER DATA SOURCES

The next step was to assign particular ecommerce actions to specific customers and the information about them in the CRM. This was organized in the following way:

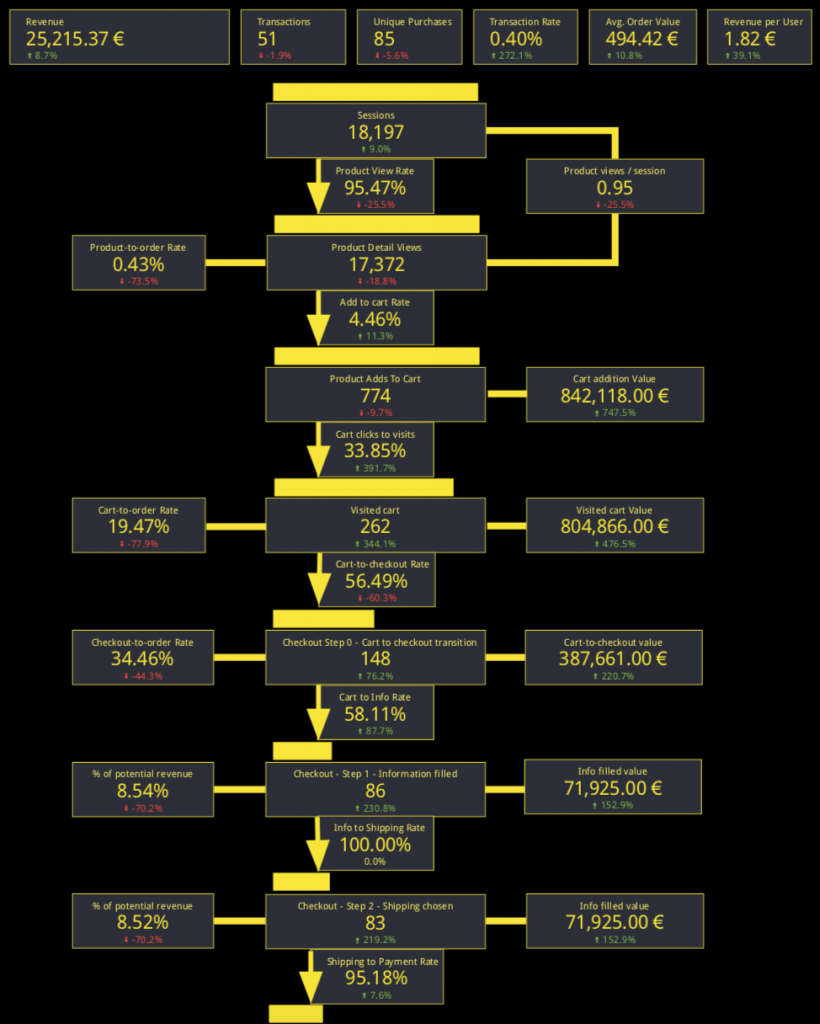
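As a rough illustration of this kind of merge (not the actual pipeline), the sketch below attaches CRM attributes to GA4 events through a shared key. It assumes the GA4 events carry a `user_id` that matches the CRM `customer_id`; the field names `segment` and `lifetime_value` are hypothetical.

```python
def merge_events_with_crm(ga4_events, crm_customers):
    """Attach CRM attributes to each GA4 event via a shared user_id key."""
    crm_by_id = {c["customer_id"]: c for c in crm_customers}
    merged = []
    for event in ga4_events:
        customer = crm_by_id.get(event.get("user_id"))
        merged.append({
            **event,
            # Events from unknown or anonymous users keep None in the CRM fields.
            "crm_segment": customer["segment"] if customer else None,
            "crm_lifetime_value": customer["lifetime_value"] if customer else None,
        })
    return merged

# Hypothetical sample rows
ga4_events = [
    {"event_name": "purchase", "user_id": "u-1", "value": 240.0},
    {"event_name": "add_to_cart", "user_id": "u-2", "value": 90.0},
    {"event_name": "view_item", "user_id": None, "value": 0.0},
]
crm_customers = [
    {"customer_id": "u-1", "segment": "returning", "lifetime_value": 1300.0},
]

for row in merge_events_with_crm(ga4_events, crm_customers):
    print(row)
```

In a warehouse this would typically be a left join, so events without a CRM match are kept rather than dropped.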

Our next step was to visualize the ecommerce funnel with as many details as possible:

MACHINE LEARNING IN BIGQUERY

Next, we moved to the most complex part: machine learning models in BigQuery ML.

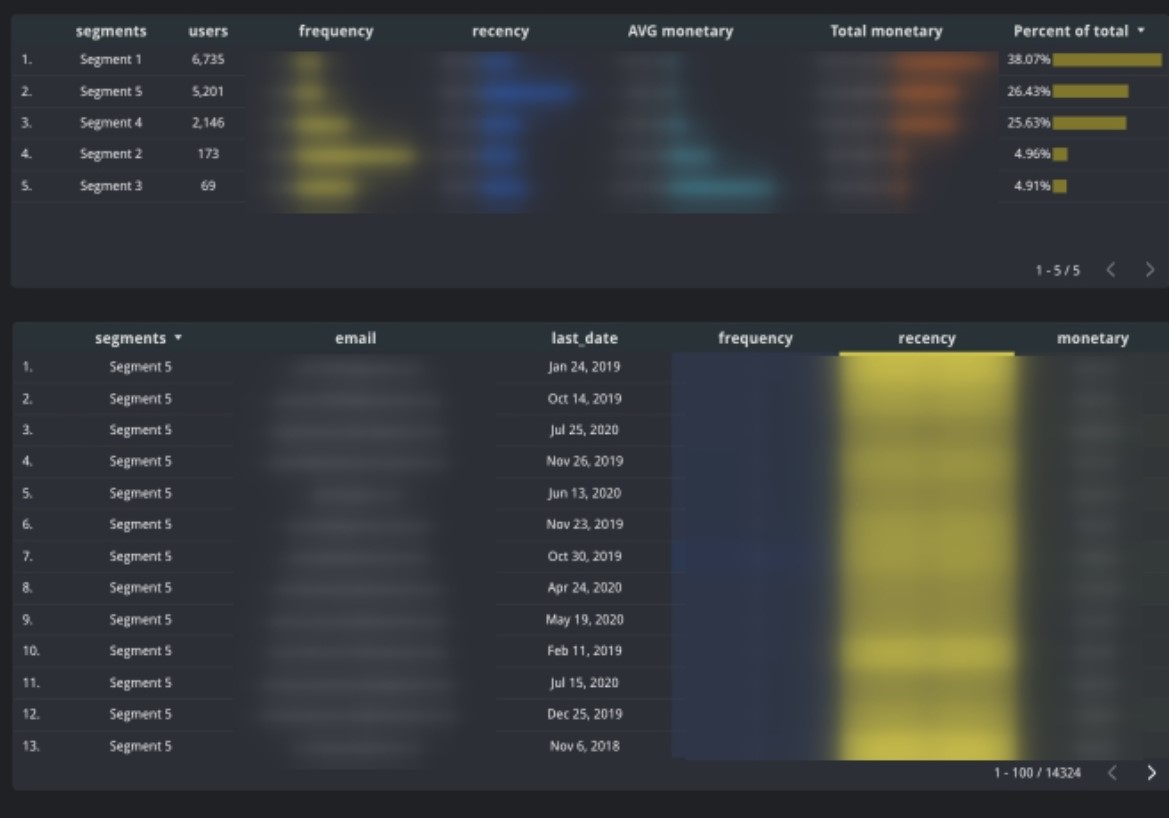

The first task was to segment our customer base into cohorts based on the recency, frequency and monetary value of their purchases. We employed a k-means model that clearly split the customer base into 5 distinct segments:
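For readers unfamiliar with RFM segmentation, here is a minimal, self-contained sketch of the idea in plain Python. The project itself used a k-means model in BigQuery ML; this is only an illustration with made-up orders, a hand-written Lloyd's algorithm and k=2 instead of 5 because the sample is tiny.

```python
import random
from datetime import date

def rfm_features(orders, today):
    """Aggregate (customer_id, order_date, amount) rows into (R, F, M) tuples."""
    stats = {}
    for customer_id, order_date, amount in orders:
        recency = (today - order_date).days
        r, f, m = stats.get(customer_id, (recency, 0, 0.0))
        stats[customer_id] = (min(r, recency), f + 1, m + amount)
    return stats

def standardize(rows):
    """Rescale each column to zero mean and unit variance."""
    cols = list(zip(*rows))
    means = [sum(c) / len(c) for c in cols]
    stds = [(sum((x - mu) ** 2 for x in c) / len(c)) ** 0.5 or 1.0
            for c, mu in zip(cols, means)]
    return [tuple((x - mu) / sd for x, mu, sd in zip(row, means, stds)) for row in rows]

def kmeans(points, k, iterations=50, seed=0):
    """Plain Lloyd's algorithm; returns a cluster index for every point."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: nearest centroid by squared Euclidean distance.
        labels = [min(range(k), key=lambda j: sum((a - b) ** 2 for a, b in zip(p, centroids[j])))
                  for p in points]
        # Update step: move each centroid to the mean of its members.
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:  # keep the old centroid if a cluster went empty
                centroids[j] = tuple(sum(c) / len(members) for c in zip(*members))
    return labels

# Hypothetical orders: (customer_id, order_date, amount)
today = date(2024, 1, 31)
orders = [
    ("c1", date(2024, 1, 30), 400.0), ("c1", date(2024, 1, 10), 350.0),
    ("c2", date(2024, 1, 28), 500.0), ("c2", date(2023, 12, 20), 300.0),
    ("c3", date(2023, 6, 1), 60.0),
    ("c4", date(2023, 5, 15), 45.0),
]
rfm = rfm_features(orders, today)
labels = kmeans(standardize(list(rfm.values())), k=2)
print(dict(zip(rfm, labels)))
```

Standardizing first matters: without it, monetary value would dominate the distance simply because its numbers are larger than recency or frequency.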

This instantly allowed the business owners to reach several conclusions:

Based on this, we were able to formulate multiple hypotheses that needed validation. Our final actions led us to the following solutions:

Result:

CONCLUSION

We continue to work with this customer on many fronts: CRO, sales automation, UX/UI, SEO and other aspects of business development. We see this case as a pivotal point in our relationship for a couple of reasons:

Case:

US-based multiplatform video streaming service: user-retention optimisation.

Context:

Our customer is a US-based streaming service that operates mainly in Latin America and generates its revenue from advertising embedded in the video stream. The platform is available as a website, iOS and Android apps, a Samsung TV app and a Roku TV channel.

Tools/approaches used:

Main challenges of the project:

Project requirements:

Project implementation:

Due to the high complexity of the implementation, it became obvious that we had to map the data architecture first, so we could specify all the peculiarities of the current data infrastructure and of each platform in detail. We therefore drafted the current schema and added the pieces of the data flow that we considered essential. See the result below:

This made it clear that before mapping the events to be sent to Google Analytics 4, we needed to align the event schema so that it would be universal across all platforms. Another aspect was that some events would be sent client-side and, on some platforms, via a server-side implementation (Measurement Protocol for GA4). This needed separate documentation and full alignment with the backend developer teams (each platform had a separate dev team). Understanding the overall picture saved us a lot of time and money.
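A minimal sketch of what a universal event schema plus a server-side hit could look like. The alias table, platform keys and raw event names are hypothetical; the endpoint and body shape follow the GA4 Measurement Protocol for web streams. The request is only built, not sent.

```python
import json

# Hypothetical mapping from platform-specific event names to one shared schema.
EVENT_ALIASES = {
    "roku": {"playbackStarted": "video_start", "playbackEnded": "video_complete"},
    "samsung_tv": {"play": "video_start", "finish": "video_complete"},
}

def to_universal_event(platform, raw_name, params):
    """Translate a platform-specific event into the shared event schema."""
    name = EVENT_ALIASES.get(platform, {}).get(raw_name, raw_name)
    return {"name": name, "params": {**params, "platform": platform}}

def build_mp_request(measurement_id, api_secret, client_id, events):
    """Build the URL and JSON body for a GA4 Measurement Protocol hit (web stream)."""
    url = ("https://www.google-analytics.com/mp/collect"
           f"?measurement_id={measurement_id}&api_secret={api_secret}")
    body = json.dumps({"client_id": client_id, "events": events})
    return url, body

# Placeholder credentials and IDs, for illustration only.
event = to_universal_event("roku", "playbackStarted", {"video_id": "abc"})
url, body = build_mp_request("G-XXXXXXX", "placeholder-secret", "555.123", [event])
print(url)
print(body)
```

Keeping the alias table in one place means every platform team documents against the same event names, whichever transport it ends up using.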

It took us a couple of days to map the events, create the corresponding documentation for each platform and discuss the do’s and the obstacles of the current implementation. For instance, one of the major surprises for us was that some TV platforms didn’t have an integration with the Firebase SDK, and that the current advertising platform didn’t have an API that would allow exporting monetization data directly to BigQuery. We therefore had to move forward with some workarounds.

The peculiarities of some platforms (e.g. Roku) forced us to implement custom-built SDKs that, after some adjustments on our side, allowed us to stream events to Firebase and subsequently import them into GA4. In some cases we were able to set up a direct server-to-server connection via the Measurement Protocol. The rest was implemented via server-side GTM. The solutions did not come without friction, so we saw no reason to wait for every platform’s implementation and gradually started pulling data into BigQuery.

MAIN BUSINESS METRICS

A streaming business has a range of indicators that tell the story of its overall sustainability. On the one hand, you have to keep an eye on engagement metrics such as Average Viewership Time, Number of Video Content Pieces Watched, Video Viewership Depth, etc. On the other hand, you have to make sure you’re building a loyal user base: this traffic has a high ROAS and increases the virality of your platform, which allows it to grow organically.

ENGAGEMENT METRICS

The main KPIs were displayed in a series of scorecards, with an engagement visualization that let the customer understand video watch dynamics in the form of a funnel.

Another piece of information was the pause/play click data and engagement stats (total watch time, average watch percentile, # of users) by film/genre/author, which would help to display only relevant content in movie lists.

RETENTION METRICS

As traffic on the website is mostly not logged in, and the majority of traffic came from apps and TV platforms, we used user_pseudo_id as the main matching key for the retention matrix. The retention/churn matrix was used to spot bottlenecks in the LTV of the audience and to find ways to resurrect it.

Although the Retention/Churn Matrix allowed us to understand weekly shifts in retention, we still wanted to go more granular and look at the overall picture in terms of Retained, Churned, Resurrected and New Users.

This allowed us to formulate in-depth metrics behind top-level KPIs such as Retention, Churn and Resurrection Rates, and to analyze changes on a product level on a weekly and monthly basis.
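The Retained / Churned / Resurrected / New split can be sketched with simple set operations on weekly active users. The sample IDs are invented, and the rate definitions below are one common convention we assume here, not necessarily the exact formulas used in the dashboards.

```python
def classify_users(active_by_week, week):
    """Label users as new, retained, resurrected or churned for `week`.

    active_by_week: list of sets of user_pseudo_id, one set per week.
    """
    current = active_by_week[week]
    previous = active_by_week[week - 1] if week > 0 else set()
    seen_before = set().union(*active_by_week[:week])
    return {
        "new": current - seen_before,                        # never seen before
        "retained": current & previous,                      # active last week too
        "resurrected": (current & seen_before) - previous,   # back after a gap
        "churned": previous - current,                       # active last week, gone now
    }

def lifecycle_rates(segments):
    """Turn the segment sets into rates (assumed definitions)."""
    retained, churned = len(segments["retained"]), len(segments["churned"])
    resurrected = len(segments["resurrected"])
    previous_active = retained + churned
    current_active = len(segments["new"]) + retained + resurrected
    return {
        "retention_rate": retained / previous_active if previous_active else 0.0,
        "churn_rate": churned / previous_active if previous_active else 0.0,
        "resurrection_rate": resurrected / current_active if current_active else 0.0,
    }

# Hypothetical weekly active users keyed by user_pseudo_id
weeks = [{"a", "b", "c"}, {"a", "b", "d"}, {"a", "c", "e"}]
segments = classify_users(weeks, week=2)
print(segments)
print(lifecycle_rates(segments))
```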

This report was used extensively by the marketing team for email automation: they were able to understand which days in the user lifecycle are critical and re-engage users with content that suited their interests.

MACHINE LEARNING IN BIGQUERY

Anomaly Spotting

Due to high server load, the customer’s infrastructure was experiencing downtime and, as a consequence, a loss of ad revenue. We designed an anomaly-spotting tool that allowed us to capture statistical deviations in traffic and made troubleshooting easier. The model took historical data from the previous period and accounted for platform-specific behavioral patterns.
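The simplest version of this idea is a per-platform z-score against that platform's own history. The sketch below is a stand-in for illustration, not the model we built; the threshold of 3 standard deviations and the sample counts are assumptions.

```python
from statistics import mean, stdev

def find_anomalies(history, current, threshold=3.0):
    """Flag platforms whose current traffic deviates strongly from their own history.

    history: {platform: [past session counts for comparable periods]}
    current: {platform: session count for the period being checked}
    """
    anomalies = {}
    for platform, value in current.items():
        past = history.get(platform, [])
        if len(past) < 2:
            continue  # not enough data to estimate the spread
        mu, sigma = mean(past), stdev(past)
        if sigma == 0:
            continue  # flat history: a z-score is undefined
        z = (value - mu) / sigma
        if abs(z) >= threshold:
            anomalies[platform] = round(z, 2)
    return anomalies

# Hypothetical hourly session counts per platform
history = {
    "roku": [100, 110, 90, 105, 95],
    "web": [1000, 1020, 980, 1010, 990],
}
current = {"roku": 20, "web": 1005}
print(find_anomalies(history, current))
```

Comparing each platform only with itself is what keeps a quiet TV channel from looking anomalous next to a busy website.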

RESULTS

Being able to measure Retention / Resurrection / Churn rates, we were able to help the customer’s marketing team set benchmarks for those metrics and increase the Retention rate by 15% over 4 months by recommending and helping to implement:

One of the goals was to maximize ad revenue by:

CONCLUSION

As we can see, engagement and retention metrics are usually quite intertwined. One of the biggest lessons learned in this project is that, before the implementation stage, one should thoroughly check the data infrastructure, especially when it comes to multiplatform business models. It is also of the utmost importance to have an idea of how third-party vendors (in our case, the ad distribution network) interact with the customer’s servers and front-end. Happily, for our project goals we were able to come up with a workaround.

Another instance of “what could be done better” is starting with user segmentation first. We could have done an ML-based segmentation of the user base. This would have given us a better idea of the behavioral patterns of different user segments, and hence lots of insights into the critical data points that should be included in the dashboards and ongoing monitoring. This is something we’re currently working on in this project.

Case:

UK-based waste-management company: lead-generation optimisation.

Context:

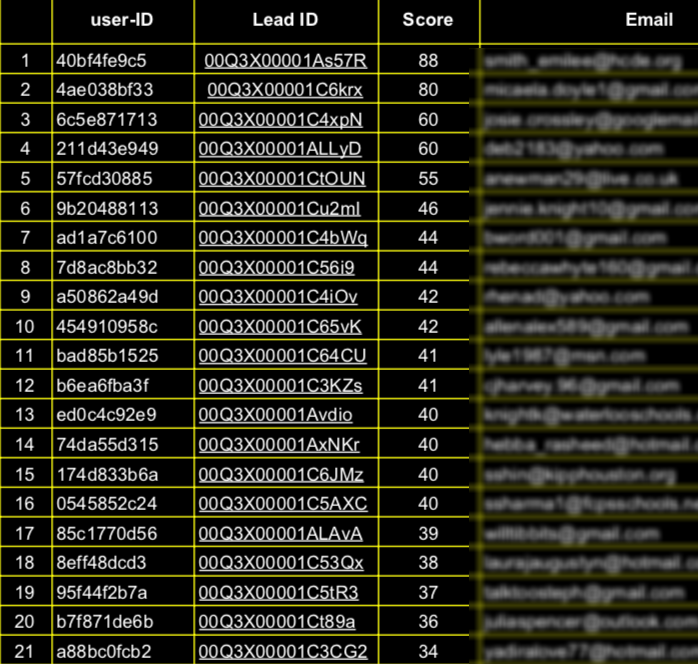

Our customer is a waste-management company in London that generates leads mainly through Google Ads. We were asked to set up tracking for repeat customers only, who could be identified based on the range of answers in the 7-step lead form. They also asked us to help them prioritize some leads over others based on information from their online and offline activities.

Tools/approaches used:

Main complexities of the project:

Project requirements:

Project implementation:

First and foremost, we designed the overall data architecture to make it clear to the customer and the team how the setup should work in theory, and to adjust it as we faced specific blockers on the go. Fortunately, it stayed exactly the way we designed it in the beginning.

GA4 SETUP AND EVENT IMPLEMENTATION

To begin with, we wanted to make sure we collected the maximum number of events and parameters from the online journey in GA4 and, consequently, in BigQuery. The implementation was done via server-side GTM in order to minimize the data loss that is usually caused by ad blockers and other factors. One of the best ways to track multi-step forms is to visualize them as a funnel, which allows you to optimise them by spotting the bottlenecks:

In this case it was important for the customer to track one-off and repeat requests separately.

Based on the funnel above, one can see the transfer rate from step to step and the final conversion rate of the form.
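The two numbers mentioned here are straightforward to compute once you have the user count per form step. A minimal sketch, with made-up counts for a 7-step form:

```python
def funnel_rates(step_counts):
    """Return step-to-step transfer rates and the overall conversion rate."""
    transfers = [
        (nxt / cur if cur else 0.0)
        for cur, nxt in zip(step_counts, step_counts[1:])
    ]
    overall = step_counts[-1] / step_counts[0] if step_counts[0] else 0.0
    return transfers, overall

# Hypothetical number of users reaching each of the 7 form steps
step_counts = [1000, 800, 600, 450, 360, 270, 216]
transfers, overall = funnel_rates(step_counts)

for step, rate in enumerate(transfers, start=1):
    print(f"step {step} -> {step + 1}: {rate:.0%}")
print(f"form conversion rate: {overall:.1%}")
```

The step with the lowest transfer rate is the first bottleneck to look at.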

The other important aspect of the implementation was to make sure that all possible actions and metrics would be captured properly, e.g. time spent on the website, scrolls, widget interactions, chat activity, form interactions, # of visits before the conversion, exact location of the customer, etc. This would later become the basis for the lead-scoring automation we came up with.

One more important aspect was gclid extraction, since we needed lead scores to be sent back to the Google Ads accounts for performance optimisation.
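The gclid arrives as a query parameter on the landing-page URL, so extracting it is a small parsing job. A minimal sketch using only the standard library; the URLs are made up, and in practice the value would be stored with the lead so a score can be matched back to the click later.

```python
from urllib.parse import urlparse, parse_qs

def extract_gclid(landing_url):
    """Return the gclid query parameter from a landing-page URL, or None."""
    values = parse_qs(urlparse(landing_url).query).get("gclid")
    return values[0] if values else None

print(extract_gclid("https://example.com/quote?utm_source=google&gclid=Cj0abc123"))
print(extract_gclid("https://example.com/quote?utm_source=newsletter"))
```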

MERGING GA4 DATA WITH CRM AND OTHER DATA SOURCES

The next step was to assign particular online actions to specific customers and the information about them in the CRM. This way we got the offline conversion information that could be further fed to the machine learning engine and the Google Ads account. This was organized in the following way:

This gave us a good understanding of the characteristics of the most promising customers, but we still needed to link their exceptional performance to their online behavior.

MACHINE LEARNING IN BIGQUERY

Next, we moved to the most interesting part: machine learning models in BigQuery ML.

In the first stage, we needed to understand which features (events and parameters) are the best indicators of a user’s likelihood to convert. We therefore ran four classification models (decision tree, random forest, XGBoost and k-nearest neighbours), which did their job with varying degrees of success. The results showed that the biggest impact on conversion rate comes from the following factors: geographical location (based on zip code), time spent on the website, # of visits made by the user, the commercial/residential option in the form, interaction with the Q&A section of the website, time spent filling in the form, device type and model, etc.

In the second stage, we fed the results of the previous one into a logistic regression model, which allowed us to estimate the probability of a user converting at session level. Once the session has happened, we send the score to the advertising account. This allows advertising accounts to learn faster from the traffic they generate than with the classical conversion attribution model, and hence to optimise performance more efficiently.
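To make the session-level score concrete, here is a minimal sketch of how a trained logistic regression turns session features into a probability. The feature names, weights and intercept are invented for illustration; in the real setup they come from the model trained in BigQuery ML.

```python
import math

# Hypothetical coefficients; real values come from the trained model.
WEIGHTS = {
    "time_on_site_min": 0.30,
    "visits": 0.40,
    "is_commercial": 1.10,
    "viewed_faq": 0.60,
}
INTERCEPT = -3.0

def conversion_probability(features):
    """Logistic regression score: sigmoid of the weighted feature sum."""
    z = INTERCEPT + sum(WEIGHTS[name] * features.get(name, 0.0) for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))

# Two hypothetical sessions
casual = {"time_on_site_min": 1, "visits": 1}
engaged = {"time_on_site_min": 6, "visits": 3, "is_commercial": 1, "viewed_faq": 1}

print(f"casual session:  {conversion_probability(casual):.2f}")
print(f"engaged session: {conversion_probability(engaged):.2f}")
```

Because every session gets a score, the ad platform receives a signal long before the final sale is recorded in the CRM.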

Result:

CONCLUSION

While lead-generation businesses usually have a higher visitor-to-lead conversion rate than ecommerce businesses, there is a factor that might affect the ability of machine learning to generate stable and consistent results: the conversion from lead to customer. This aspect includes two variables. Firstly, information about final conversions might reach the system late due to a long sales cycle, so one should be really careful when deciding to implement machine learning models in a marketing stack. The other issue might be connected with the CRM and the salespeople in place, who might disrupt the data flow through an undisciplined approach to CRM maintenance and updates, or simply sell less due to multiple objective and subjective factors. Happily, in this case neither of these factors came into play.